Building AI agents has become the holy grail of modern AI software development, with everyone from startups to tech giants eager to harness communities of AI agents to automate complex tasks. However, exciting as the prospect of creating autonomous, intelligent systems may be, getting them to work reliably is incredibly hard. Even deciding on the right abstraction or agent library can be daunting. There are several popular options (CrewAI, Autogen, LangGraph, …), each with its own strengths and weaknesses, and new libraries pop up every day.

OpenAI recently shared a new swarm framework for building agent communities, or swarms. I’ve written earlier about the elegant LLM software abstractions that the OpenAI team has come up with, from the completion API to functions/tools. It has been fun to watch how they cut through complexity at each stage of the API evolution and prioritized elegant simplicity. With the swarm release, I got very curious about the design choices and trade-offs they made.

Aside: In this article, I’ll focus on the core software abstractions for building Agents seamlessly. There are many other interesting questions around Agents: When do you really need Agents? Build monolithic vs modular Agents? How hard is it to build reliable Agents / Swarms? To address them, I will either expand the scope of this article or write companion articles later.

OpenAI Swarm

Let’s dive into the swarm library design. The key components:

An Agent class with instructions (the top-level goals of the Agent), functions (the tools the agent can call on for help), and the LLM model it relies on. Agents are entirely stateless.

Agent (internal) executor: given the user input, it observes the past conversation (the system state), decides which tool to use, invokes the tool, and appends the result to the conversation. If no tool is relevant, it responds directly and stops.

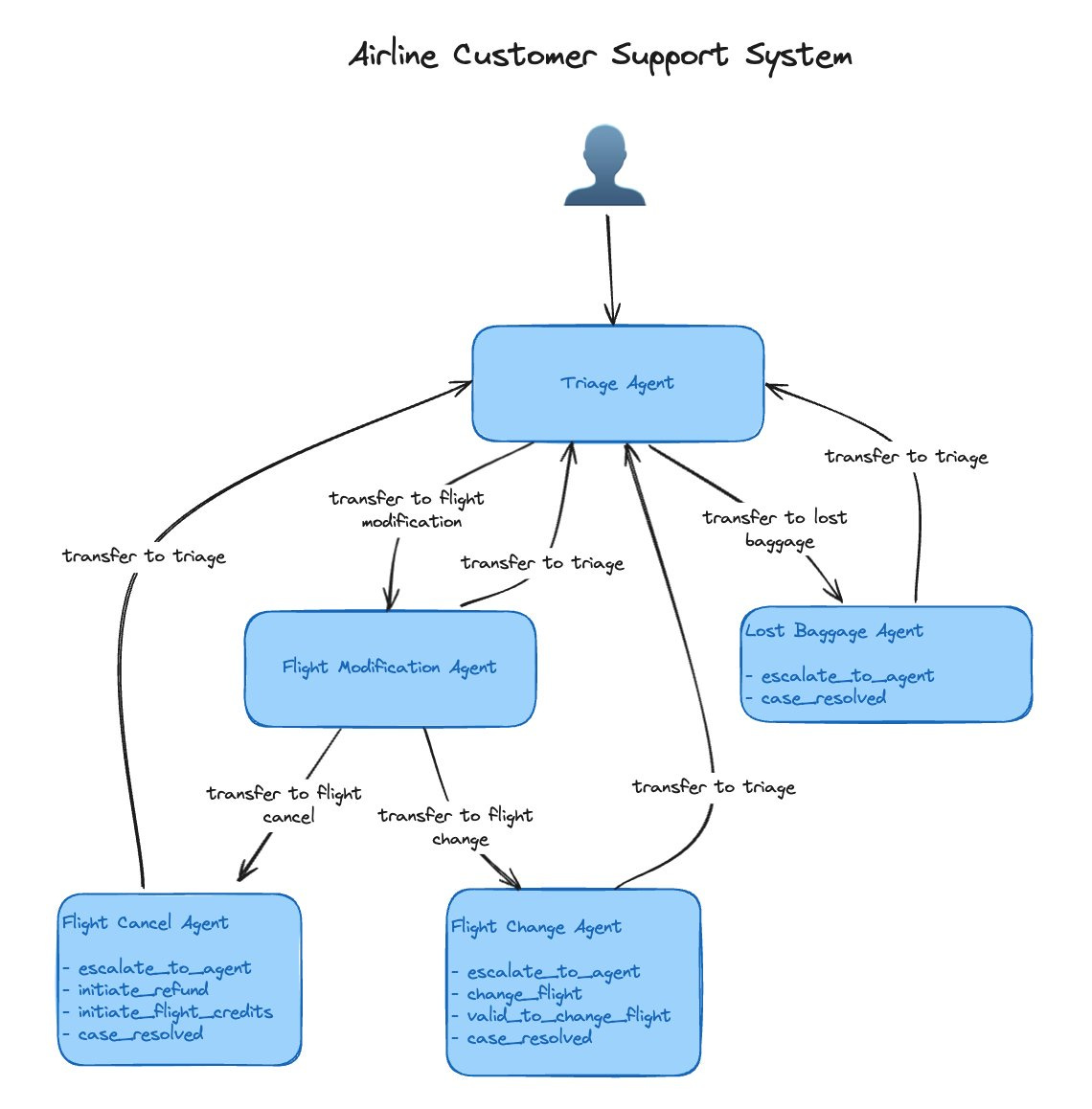
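To make the executor loop concrete, here is a minimal sketch in plain Python. It is not Swarm's actual implementation: the `Agent` dataclass, `fake_model`, and `run` are hypothetical stand-ins, and `fake_model` replaces the real LLM call with a scripted rule so the example runs offline.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    # Hypothetical stand-in for an agent definition: configuration only, no state.
    name: str
    instructions: str
    functions: list[Callable[[], str]] = field(default_factory=list)


def fake_model(agent, messages):
    """Scripted stand-in for the LLM: call each tool once, then answer directly."""
    used = {m["name"] for m in messages if m["role"] == "tool"}
    for fn in agent.functions:
        if fn.__name__ not in used:
            return {"tool": fn.__name__}
    return {"content": f"{agent.name}: done"}


def run(agent, messages, max_turns=10):
    history = list(messages)  # all state lives in the conversation, not in the agent
    tools = {fn.__name__: fn for fn in agent.functions}
    for _ in range(max_turns):
        decision = fake_model(agent, history)
        if "tool" not in decision:
            # No relevant tool: respond directly and stop.
            history.append({"role": "assistant", "content": decision["content"]})
            break
        # Invoke the chosen tool and append its result to the conversation.
        result = tools[decision["tool"]]()
        history.append({"role": "tool", "name": decision["tool"], "content": result})
    return history


def get_weather():
    return "sunny"


weather_agent = Agent("Weather", "Report the weather.", [get_weather])
history = run(weather_agent, [{"role": "user", "content": "Weather?"}])
```

The agent object never changes during the run; everything the loop knows lives in the `history` list, which is the sense in which agents are stateless.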

The key distinguishing construct of swarm is that of transfer of control (handoff) to another agent. After finishing its task, an agent can transfer control to another agent. This essentially creates a network of agents, executing sequentially, tied by internal routers.

The coolest part is that transfer is implemented elegantly as yet another tool (a function that returns the target agent). Here’s a quick code illustration.

    from swarm import Swarm, Agent

    client = Swarm()

    def transfer_to_agent_b():
        return agent_b

    agent_a = Agent(
        name="Agent A",
        instructions="You are a helpful agent.",
        functions=[transfer_to_agent_b],
    )

    agent_b = Agent(
        name="Agent B",
        instructions="Only speak in Haikus.",
    )

    response = client.run(
        agent=agent_a,
        messages=[{"role": "user", "content": "I want to talk to agent B."}],
    )

    print(response.messages[-1]["content"])

Output:

    Hope glimmers brightly,
    New paths converge gracefully,
    What can I assist?

The transfer_to_agent_b tool simply returns a reference to agent_b; when it executes, control moves from agent_a to agent_b.

Note that with this design, the thread of control moves sequentially from one agent to another in the connected network; at any time, control is at a single agent.

Transfer of control between agents might look like calling a function F in a conventional programming paradigm, i.e., when main calls F, control moves from main to F. The key difference is that, in conventional programming, control must always return to main. In swarm Agents, however, control can meander among agents (like messages being passed) without a pre-determined flow. This enables encoding complex behaviors.
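Here is a toy version of this control flow, assuming a scripted stand-in for the model that always calls the active agent's first tool. The agent names (`triage`, `billing`, `refunds`) and the `run` helper are made up for illustration and are not part of Swarm.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    # Hypothetical minimal agent: a name plus the tools it may call.
    name: str
    functions: list[Callable] = field(default_factory=list)


def run(start, max_hops=10):
    """Follow handoffs until the active agent has no tool left to call."""
    active, trace = start, [start.name]
    for _ in range(max_hops):
        if not active.functions:
            break
        # Stand-in for the model's decision: always call the first tool.
        result = active.functions[0]()
        if isinstance(result, Agent):
            # A tool that returns an Agent is a handoff: the active agent changes.
            active = result
            trace.append(active.name)
        else:
            break
    return active, trace


def transfer_to_billing():
    return billing


def transfer_to_refunds():
    return refunds


triage = Agent("triage", [transfer_to_billing])
billing = Agent("billing", [transfer_to_refunds])
refunds = Agent("refunds")

final_agent, trace = run(triage)
print(trace)  # ['triage', 'billing', 'refunds']
```

Unlike a function call, nothing here returns to `triage`: the run ends wherever the last handoff left it.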

Another interesting aspect of this design is that each Agent (because it encapsulates an LLM) is capable of responding back to the user and carrying forward the conversation, while executing its task. We don't need a dedicated user-facing agent.

For parallelism, one can set up multiple networks of agents when it is really needed. More on this later.
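One way this could look, as a rough sketch only: each network stays sequential inside, and the networks themselves run side by side in a thread pool. `run_network` is a hypothetical placeholder for running one agent network to completion; it is not a Swarm function.

```python
from concurrent.futures import ThreadPoolExecutor


def run_network(name, user_message):
    """Hypothetical stand-in for running one sequential agent network to completion."""
    return f"[{name}] handled: {user_message}"


# Two independent networks, each given its own request.
jobs = [("research", "find sources"), ("drafting", "write outline")]

with ThreadPoolExecutor(max_workers=2) as pool:
    # pool.map returns results in the same order as the input jobs.
    results = list(pool.map(lambda job: run_network(*job), jobs))

print(results)
```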

📜 OpenAI provides a detailed cookbook illustrating the internals of the Agent executor, with function calls including handoff (it includes a nice diagram). Because they use only completion and tool calls, the Agents are entirely stateless.

Swarm, in contrast to other frameworks:

No explicit construct for Task/Flow/Routine. CrewAI, for example, needs you to define explicit Tasks, which are allocated to Agents. Autogen is similar.

Swarm lacks a separate Task class or an explicit definition of what constitutes a Task. A Task is defined implicitly by the control flow/transfer among Agents. As a nice consequence, a single Agent can be part of multiple Tasks.

In other frameworks, I've often felt that the Agent and Task definitions duplicate effort, with both serving a similar purpose. So not having a Task class, and defining Tasks implicitly via a network of Agents, is a welcome change.

All state is shared among the agents: sharing is global, with no message passing among agents. This gives a lot of flexibility, but it is also easy to shoot oneself in the foot.
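A small illustration of both the flexibility and the foot-gun, using a plain dictionary as a stand-in for the shared state. All function names here are hypothetical; the point is only that every agent's tools read and write the same object.

```python
# One dictionary shared by every agent's tools (hypothetical names throughout).
context = {}


def intake_save_name(ctx, name):
    # Tool of an "intake" agent: writes to the shared state.
    ctx["user_name"] = name
    return "saved"


def support_greet(ctx):
    # Tool of a "support" agent: reads what the intake agent stored.
    return f"Hello, {ctx.get('user_name', 'stranger')}!"


def cleanup_reset(ctx):
    # Tool of a third agent: wipes state that other agents still rely on.
    ctx.clear()
    return "reset"


intake_save_name(context, "Ada")
greeting = support_greet(context)

cleanup_reset(context)
greeting_after_reset = support_greet(context)

print(greeting)              # Hello, Ada!
print(greeting_after_reset)  # Hello, stranger!
```

No agent had to send a message for `support_greet` to see the name, and no agent was warned when `cleanup_reset` removed it.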

Agent definitions also link to the high-level notion of conversation flow, which simplifies conversation implementation using Agents. In a perfectly modular system, each agent would be tailored to handle a specific conversation flow, with minimal overlap or redundancy.

Tip: To use general LLM backends with swarm, use the open-swarm library.

Tip: In many cases, a simple handoff by returning the new Agent variable may not be enough. Solution: use generalized handoff using a special Result datatype.
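To show what a generalized handoff can carry, here is a sketch with a home-made `Result` dataclass. Swarm ships its own `Result` type; the one below is my stand-in, and its field names (`value`, `agent`, `context_variables`) are an assumption that should be checked against the library. `apply_result` is a hypothetical helper, not a Swarm function.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Agent:
    name: str


@dataclass
class Result:
    # Stand-in result type: a message, an optional handoff target, and state updates.
    value: str = ""
    agent: Optional[Agent] = None
    context_variables: dict = field(default_factory=dict)


def apply_result(result, active, context):
    """Fold one tool result into the running state.

    Returns (next_agent, merged_context, tool_message).
    """
    merged = {**context, **result.context_variables}
    next_agent = result.agent if result.agent is not None else active
    return next_agent, merged, result.value


triage = Agent("triage")
sales = Agent("sales")


def talk_to_sales():
    # One tool call hands off, reports a value, and updates shared state.
    return Result(
        value="Transferring to sales.",
        agent=sales,
        context_variables={"department": "sales"},
    )


active, context, message = apply_result(talk_to_sales(), triage, {"user": "Ada"})
print(active.name, context, message)
```

Compared with returning a bare Agent, a single return value here does three jobs at once: it switches the active agent, leaves a message in the conversation, and updates the shared state.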

To wrap up, I find it very elegant that, by introducing the agent handoff construct, the swarm designers managed to keep programming Agents simple (only a new Agent class, no Task; no explicit message passing, all memory global) and yet retain the ability to define complex Agents.

The cherry on the cake is that agent-transfer is just another tool, defined via the core functions construct. Agents are stateless and mirror conversation flows, which makes the full conversational system design quite convenient.

The bigger picture: The principles of swarm intelligence are used in a wide range of applications, including optimization, machine learning, robotics, and network design. They manifest as decentralized, self-organized systems, where the individual agents interact and cooperate to achieve a common goal. The swarm library lets us design Agent networks adhering to these principles. I'm excited to see how creators will leverage this foundation to build adaptive systems, particularly with embodied agents.