I Started a New Substack. Here’s What I’ve Been Writing.

Agent Engineering In Practice

If you’ve been reading the OffNote Labs Newsletter for a while, you know I like to get under the hood of AI — into how things actually work, from first principles.

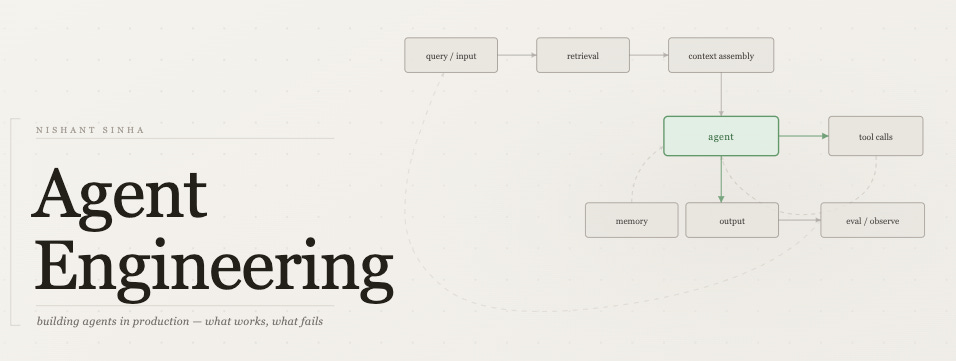

A few weeks ago I started a new publication called Agent Engineering — a space dedicated to one question: what does it really take to build robust AI agents in production? The assumptions you make, the things that break, and the thinking that holds up.

The OffNote Labs newsletter isn’t going anywhere — Agent Engineering is just a space to go deeper on one specific area.

I’ve been writing more frequently over there, and I’d love to have you along if the theme resonates.

Here’s a taste of what I’ve been exploring.

1. Vector Search Is a Crude Proxy for Relevance

Vector search is useful, but it’s often asked to do more than it can. Embeddings capture some aspects of a query-document relationship — syntax, semantics — but they miss a lot: hidden intent, domain nuance, tradeoffs the query never states explicitly. I wrote about where this assumption breaks down, and what approaches hold up better when you actually need to judge relevance rather than just retrieve candidates.

2. Two Key Practices to Build Agents Safely in Production

There’s a lot written about building agents, but not much about running them over time without things quietly going wrong. I’ve been thinking about two practices that seem to matter most once you’re past the demo stage: making runs observable enough that failures aren’t surprises, and building a way to change prompts with some confidence rather than hoping for the best.

3. AI Isn’t About Models. It’s About Distribution.

Every week there’s a new model release. But how a model actually reaches users, and which ones, has more to do with the distribution layer than with benchmark results. I mapped out the three layers this plays out across: infra gateways, developer tools, and user-facing apps. It’s a useful framing if you’re trying to understand where things are actually heading.

4. End of Era for Python?

Wes McKinney made an argument recently that agents will gradually eat away at Python’s data science dominance — with Go and Rust filling in from the bottom as stacks get re-engineered around how agents write and reason about code. I’m not sure I fully agree, but I found it worth thinking through seriously rather than dismissing.

5. Stuck in Eval Loop? Look for a Better Abstraction

A pattern I keep seeing: teams invest heavily in making their evals more thorough, catch more failures, patch more edge cases — and still find themselves going in circles. The question that often goes unasked is whether the system keeps failing because the abstraction underneath is the wrong one. I wrote about what that looks like in practice, with a concrete example where moving up one layer of abstraction made a surprisingly large difference.

Come Read Along

I started Agent Engineering because I kept running into the same gap — a lot of excitement about what AI agents could do, but not enough honest writing about what it actually takes to build them well.

If you’re in the weeds with AI, or you’re about to be, I’d love to have you there. It’s free, it’s candid, and it gets into the details most newsletters skip.